- From: Orie Steele <orie@transmute.industries>

- Date: Fri, 8 Sep 2023 17:38:47 -0500

- To: Nate Otto <nate@ottonomy.net>

- Cc: "W3C Credentials CG (Public List)" <public-credentials@w3.org>

- Message-ID: <CAN8C-_JvasfskizPT+RBw0aTTHhKdCO31azi_j_pdTfxEUZTkQ@mail.gmail.com>

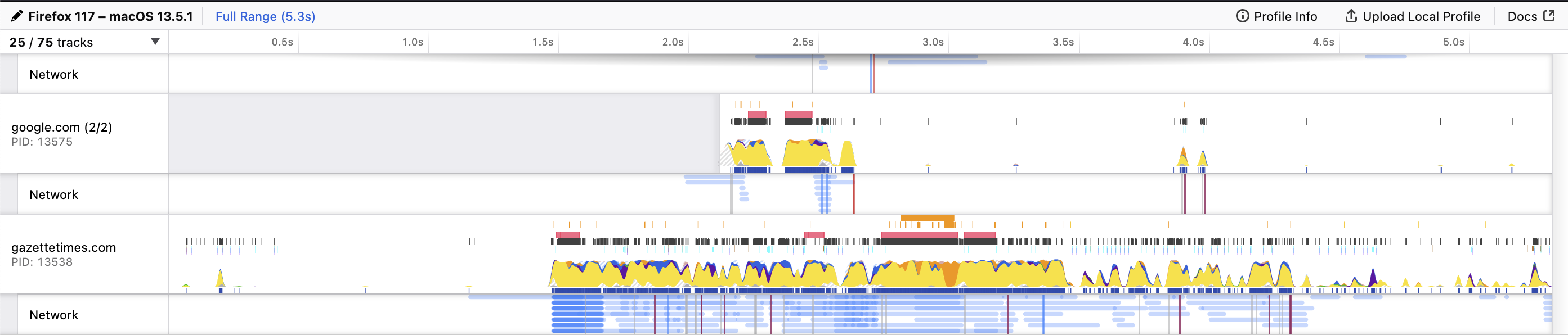

> I'd expect that any of the cryptographic operations involved in operating on a credential would be a lot smaller than what's involved in loading a webpage.

You would think that : )

But many selective disclosure schemes need to apply multiple signatures or hash operations to complicated data structures, in order to allow the data subject or controller to share a claim with authenticity and integrity without disclosing others.

If you profile those operations, you will see the following trends:

1. Hash functions matter a lot: SHA-256 vs SHA-384 vs SHA-512... when they get applied to every message, they can get expensive.
2. How much work does it take to get to a set of messages? Compare RDF Dataset Canonicalization with HMAC blinding to a JSON Pointer dict operation with key blinding...
3. Digital signatures are also expensive: P-384 vs Dilithium5, etc.

Some of these operations are "application layer pre-processing", others are "crypto layer", and those you probably can't touch or make faster without altering security...

https://research.redhat.com/blog/article/the-need-for-constant-time-cryptography

Right there you can see that optimization might only be possible in some layers of a verifiable credential. For example, the C implementation of RDF canonicalization allocates memory to be able to speed up the graph operations:

https://github.com/digitalbazaar/rdf-canonize-native/blob/master/benchmark/README.md

This is a good example of a benchmark that matters to application pre-processing. In order to see the full cost of crypto suites built on top of this, you have to consider what happens to the data next: do you sign each n-quad, or the hash of all of them?

What about a system that didn't require heavy application preprocessing before sign and verify? Now the first thing that happens is the signature is checked, and if it fails, no expensive graph operation happens because the data might have been tampered with.
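To make the "check first, process later" ordering concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: an HMAC tag over the raw bytes stands in for a real digital signature, and the function name `verify_then_process`, the `VerificationError` class, and the `credentialSubject` check are all hypothetical choices for this example, not part of any particular crypto suite.

```python
import hashlib
import hmac
import json


class VerificationError(Exception):
    """Raised when the integrity check over the raw bytes fails."""


def verify_then_process(raw: bytes, tag: bytes, key: bytes) -> dict:
    """Check integrity over the raw bytes before any parsing happens.

    Assumption: an HMAC-SHA-256 tag stands in for a digital signature.
    """
    # 1. Integrity check on the untouched bytes (constant-time comparison).
    expected = hmac.new(key, raw, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, tag):
        # Fail early: nothing has been deserialized or canonicalized yet.
        raise VerificationError("integrity check failed; payload not parsed")

    # 2. Only now deserialize the payload.
    claims = json.loads(raw)

    # 3. Input validation (hypothetical minimal check for this sketch).
    if not isinstance(claims, dict) or "credentialSubject" not in claims:
        raise ValueError("payload is not shaped like the expected credential")

    # 4. Heavier processing (graph operations, etc.) would start here.
    return claims
```

If the tag does not match, the function raises before `json.loads` ever runs, so the expensive and riskier steps are only reached for data that passed the check.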
This also lines up with web application security best practices:

https://owasp.org/www-community/vulnerabilities/Deserialization_of_untrusted_data

It's best not to mess with data before you have validated / verified it... if it's possible to do that.

To be clear, deserialization attacks can be exploited in JSON or YAML, without doing RDF / JSON-LD stuff...

It's best to apply defense in depth: check signatures, validate input, then maybe go for the heavier data processing operations as you have higher confidence in the content you are handling... and this also lines up with building less energy-intensive security products, because you don't do expensive operations until it's safe to do them and you need to do them.

Regards,

OS

On Fri, Sep 8, 2023 at 4:23 PM Nate Otto <nate@ottonomy.net> wrote:

> This kind of seems to me like barking up an unnecessary tree, but maybe as part of making a case for this type of analysis, a point of comparison could be made against a process that is a little more tangible for a human. Instead of simply comparing the compute between the cryptographic operations for different flavors of credential verification, perhaps you could also compare to the amount of compute needed to do an everyday task like load a webpage for a news article from a local newspaper website with no ad-blocker enabled.
>
> I am not quite an expert at reading the type of chart that comes out of the profiling tools in the browser, but there's a heck of a lot of compute involved in doing the sort of operation that a user might do hundreds of times in a day. I'd expect that any of the cryptographic operations involved in operating on a credential would be a lot smaller than what's involved in loading a webpage. I tried it out real quick, and it took about 3 seconds of pretty brightly lit up CPU before the page settled down and I could start reading an article.
>
> [image: Screenshot 2023-09-08 at 2.13.26 PM.png]
>
> *Nate Otto*
> nate@ottonomy.net
> Founder, Skybridge Skills
> he/him/his

--
ORIE STEELE
Chief Technology Officer
www.transmute.industries

<https://transmute.industries>

Attachments

- image/png attachment: Screenshot_2023-09-08_at_2.13.26_PM.png

Received on Friday, 8 September 2023 22:39:06 UTC