- From: Brad Hill <hillbrad@gmail.com>

- Date: Tue, 15 Sep 2015 22:42:17 +0000

- To: Henry Story <henry.story@co-operating.systems>, Tony Arcieri <bascule@gmail.com>

- Cc: Rigo Wenning <rigo@w3.org>, "public-web-security@w3.org" <public-web-security@w3.org>, "Mike O'Neill" <michael.oneill@baycloud.com>, Anders Rundgren <anders.rundgren.net@gmail.com>, public-webappsec@w3.org

- Message-ID: <CAEeYn8hPtv_UKEDBBzBYbNYKK=NMM--QYbV4GcvqFANmzHCbjg@mail.gmail.com>

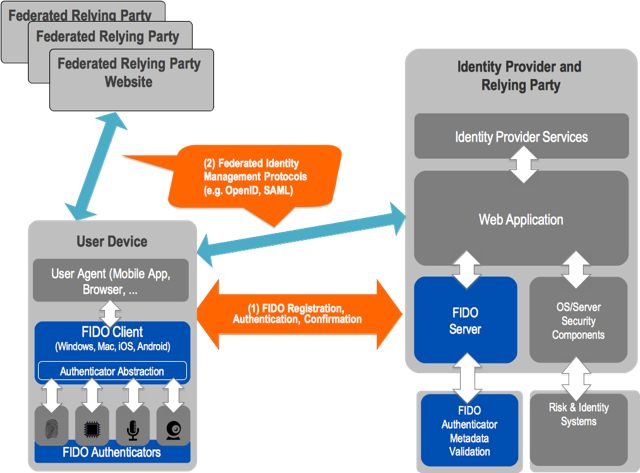

FIDO is not "like a cookie". Cookies are about session and state management. FIDO replaces passwords or certificates to provide strong authentication, and it does so in a way that is consistent with the SOP architecture of the web, so that users can be in control of how and to whom they authenticate without having to make difficult, confusing and consequential decisions about their security and privacy. It just works, and it's safe.

The diagrams you point to show how FIDO can be a secure initial-authentication step to an entity that can provide identity assertions or claims in a federated manner through existing protocols that also build on top of and work within the web and the SOP. These kinds of systems have been successfully deployed in many contexts, for many years, with users in the billions. Decoupling authentication from identity, and building both over and in concert with the core security and privacy architecture of the web, supports user choice and permissionless innovation.

Meanwhile, in the <keygen> + X.509 + TLS client cert world, the privacy-oblivious, inextricable conflation of identity and authentication makes intrusive user experiences mandatory. These experiences are inconsistent, have barely evolved in 20 years, and there is no evidence that users actually understand the consequences of the decisions they are being asked to make.

User Choice as a principle doesn't mean that we should ask the user to make choices they don't understand or which can harm them. ActiveX popped up plenty of "opportunities for choice" and gave me the "choice" to run arbitrary code in the course of ordinary web activities, but it was fundamentally user-hostile because it enabled designs that forced the user to accept risk and harm in order to access services on the web. The SOP is exactly about enabling user choice to freely browse the web and interact with services without having to be concerned about certain classes of harm. It's not perfect, but it's the best we've come up with, and the more consistent we are about it, the better it works.

<keygen> entangles being identified with being authenticated, locks the experiences and evolution of the direct relationships between users and services to the inconsistent and slow-moving world of browser UI, violates the SOP and forces the cost of that damage onto the user, and it puts authentication at a layer (the TLS handshake) where it is fundamentally problematic to the commonplace scalability and performance architecture of anything but hobbyist-level applications. That's why it's being deprecated.

On Tue, Sep 15, 2015 at 2:29 PM Henry Story <henry.story@co-operating.systems> wrote:

> On 15 Sep 2015, at 21:14, Tony Arcieri <bascule@gmail.com> wrote:
>
> On Mon, Sep 14, 2015 at 10:08 AM, Rigo Wenning <rigo@w3.org> wrote:
>
>> The same argumentation has already been used during the rechartering of the
>> WebCrypto Group. The privacy argument used by people from one of the
>> largest origins is funny at best. If I use my token with A and I use my
>> token with B, A and B have to communicate to find out that I used them both.
>
> Speaking as someone who attended WebCrypto Next Steps, the common theme to
> me was actually a fundamental incompatibility between PKCS#11 APIs and how
> web browsers operate. Many talks alluded to some sort of "bridge" or
> "gateway" or "missing puzzle piece" to connect the Web to PKCS#11 hardware
> tokens. Unfortunately there were no concrete proposals from either a
> technical or UX perspective. It was mostly a dream from all of the vendors,
> realized in slightly different vague handwavy visions, of how someone could
> swoop in and magically solve this problem for everyone. Clearly dreams
> without actual technical proposals didn't go anywhere.
>
> The reality is the SOP is the foundational security principle of the web.
> Period. Introducing SOP violations is a great way to ensure browser vendors
> don't adopt proposals.
>
> SOP is a technical Principle, which is trumped by the legal principle of
> User Control. I made this point in the thread to the TAG
> https://lists.w3.org/Archives/Public/www-tag/2015Sep/0038.html
> and it fits the TAG finding on Unsanctioned Tracking
> http://www.w3.org/2001/tag/doc/unsanctioned-tracking/
>
> Current browsers respect user control with regard to certificates - some
> better than others. It can be improved, but that can best be done through
> legal and political pressure.
>
> If the user is asked if she wants to authenticate with a global ID to a
> web site, then that is her prerogative.
> As long as she can select the identity she wishes to use, and change
> identity when she wants to, or become anonymous: she must be in control.
>
> Privacy is actually improved in distributed yet connected services. By
> having distributed co-operating organisations each in control of their
> information, each can retain their autonomy. As an example it should be
> quite obvious that the police cannot share their files with those of the
> major social networks, nor would those have time to build the tools for the
> health industry, nor would those work for universities, etc. etc... The
> world is a sea of independent agencies that need to look after their own
> data, and share it with others when needed. In order to share the data,
> they need to give other agents access, they need to *control* access, which
> means you need some form of global identities that can be as weak as
> temporary pseudonyms, or stronger. In fact they can evolve out of
> pseudonyms into strong identifiers on which a reputation has built itself.
>
> Of course anonymous or one site identities should be the basis of that and
> FIDO provides that, but not more.
> On top of FIDO you can see OpenID, SAML and OAuth profiling themselves
> very clearly in the FIDO UAF Architectural Overview:
>
> https://fidoalliance.org/wp-content/uploads/html/fido-uaf-overview-v1.0-ps-20141208.html#relationship-to-other-technologies
>
> These global identities based on URIs won't go away in a Web that is a web
> of billions of organisations, individuals and things, connected and
> interconnected.
>
> Going back to your original point though, what you're describing is of
> course extremely commonplace on the web. Web sites often leverage multiple
> advertising and analytics networks, so "A and B communicating" (perhaps
> vicariously via ad or analytic network C) is so exceedingly commonplace I'm
> not sure what you're even suggesting. This already happens practically
> everywhere all the time, to many commonly shared third parties who very
> much want stable identifiers to link users.
>
> There are of course ample other signals by which ad and analytics networks
> can track people (IP addresses and "supercookies" certainly come to
> mind), but by brushing it off, you're actually suggesting that
> cryptographic traceability should be built into the fundamental
> cryptographic architecture of the Web. This is a slippery slope argument,
> i.e. things are bad, so why not make them worse?
>
> There's no going back from that, short of throwing it all away (as is
> likely to happen to the <keygen> tag soon) and starting over from scratch
> (ala FIDO).
>
> Here is the picture from the architectural UAF document mentioned above.
> (It fails to mention that in many cases after an OAuth or OpenID the
> Relying Party communicates with the Federation Party until recently called
> the identity provider.) So really FIDO is just setting a super strong
> cookie with some cryptographic properties which then more and more often
> needs to be bolted onto actual Identity Providers. All of this relies on
> server side cryptographic keys tied to TLS, so that the major parties are
> in effect using certificates for global authentication.
>
> ( They could also have added WebID to the mix http://webid.info/ but
> that is annoyingly simple, which is why I like it :-)
>
> Now WebID is not tied logically to TLS [1]. We have some interesting
> prototypes that show how WebID could work with pure HTTP.
>
> - Andrei Sambra's first sketch with
>   https://github.com/solid/solid-spec#webid-rsa
> - Manu Sporny's more fully fleshed out HTTP Message signature
>   https://tools.ietf.org/html/draft-cavage-http-signatures-04
>
> These two could be improved and merged.
>
> This would allow a move to use strong identity in HTTP/2.0 ( SPDY ) ,
> which could then be supported by the browsers who could build User
> Interfaces that give the users control of their identity with user
> interfaces described by your Credentials Management document developed here
>
> https://w3c.github.io/webappsec/specs/credentialmanagement/#user-mediated-selection
>
> Funnily enough one should already be able to try Andrei or Manu's protocol
> in the Browser with JS, WebCrypto, and ServiceWorkers [2]. There are many
> things to explore here [3] and the technology is still very new. [4]
>
> But this shows that WebCrypto actually allows one to do authentication
> across origins. ( And how could
> it not, since it is a set of low level crypto primitives? ). A Single
> Page application from one site can publish
> a public key and authenticate to any other site with that key.
>
> So it's a bit weird that SOP is invoked to remove functionality that puts
> the user in control in the browser,
> and that WebCrypto is then touted simultaneously as the answer, when in
> fact WebCrypto allows Single Page Applications (SPA) to authenticate
> across Origins, and do what the browser will no longer be able to do.
> Let's make sure the browser can also have an identity. It's an application
> after all!
>
> Let's stop using SOP as a way to shut down intelligent conversation. Let's
> think about user control as the aim.
>
> Henry
>
> [1] as it may seem from the TLS-spec which we developed first because it
> actually worked.
> http://www.w3.org/2005/Incubator/webid/spec/
>
> [2] http://www.html5rocks.com/en/tutorials/service-worker/introduction/
>
> [3] https://lists.w3.org/Archives/Public/www-tag/2015Sep/0051.html
>
> [4] which is why it is wrong to remove 15 year old <keygen> technology on
> which a lot of people depend
> around the world as explained so well by Dirk Willem Van Gulik on the
> blink thread before a
> successor is actually verified to be working.
> See Dirk's messages here:
>
> https://groups.google.com/a/chromium.org/d/msg/blink-dev/pX5NbX0Xack/GnxmmtxSAgAJ
>
> https://groups.google.com/a/chromium.org/d/msg/blink-dev/pX5NbX0Xack/4kKNMVCdAgAJ
>
> Also note that Japan is moving to eID
> http://www.securitydocumentworld.com/article-details/i/12298/
> that Sweden has a huge number of people there, and that it might not be
> too good to get noticed by
> states: Russia and the EU just this month started anti trust
> investigations against Google for example.
>
> --
> Tony Arcieri
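The linkability question argued over in this thread (one credential presented to sites A and B, versus a separate credential per origin) can be sketched in a few lines of Python. This is only an illustration under loud assumptions: random bytes stand in for a public key, an HMAC over the origin string stands in for generating a per-origin key pair, and the names `fingerprint`, `origin_scoped_credential`, `a.example` and `b.example` are all hypothetical. It is not how FIDO, `<keygen>` or any browser actually derives keys.

```python
import hashlib
import hmac
import secrets


def fingerprint(public_material: bytes) -> str:
    """Short identifier a site could store for a credential it has seen."""
    return hashlib.sha256(public_material).hexdigest()[:16]


def origin_scoped_credential(device_secret: bytes, origin: str) -> bytes:
    """Derive credential material bound to one origin.

    Assumption: this HMAC derivation is a stand-in for creating a
    distinct key pair per origin; it is not a real protocol step.
    """
    return hmac.new(device_secret, origin.encode("utf-8"), hashlib.sha256).digest()


# Hypothetical device state.
device_secret = secrets.token_bytes(32)      # never leaves the device
global_credential = secrets.token_bytes(32)  # stand-in for one certificate's public key

origins = ["https://a.example", "https://b.example"]

# What each site would record under the two models.
global_seen = {o: fingerprint(global_credential) for o in origins}
scoped_seen = {o: fingerprint(origin_scoped_credential(device_secret, o)) for o in origins}

# If both sites hold the same identifier, they (or a shared third party)
# can join their records on it.
global_linkable = len(set(global_seen.values())) == 1
scoped_linkable = len(set(scoped_seen.values())) == 1

print("global credential linkable across origins:", global_linkable)
print("origin-scoped credential linkable across origins:", scoped_linkable)
```

In this toy model the single credential yields the same identifier at both origins, while the origin-scoped values differ, so the two sites have nothing to join on unless the user chooses to link them through some other channel such as a federation protocol.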

Attachments

- image/png attachment: PastedGraphic-1.png

Received on Tuesday, 15 September 2015 22:42:56 UTC