- From: Martin Thomson <martin.thomson@gmail.com>

- Date: Tue, 13 Nov 2012 14:33:48 -0800

- To: Adam Bergkvist <adam.bergkvist@ericsson.com>

- Cc: "public-webrtc@w3.org" <public-webrtc@w3.org>, "public-media-capture@w3.org" <public-media-capture@w3.org>

- Message-ID: <CABkgnnXE3Fnu_C=k_cRkzdUYHMptm3BrdHfZ4CUa5fqOu9fxFQ@mail.gmail.com>

I've been doing some thinking about this problem and I think that I agree

with Harald in many respects. The interaction between the different

instances becomes unclear. At least with a composition style API, this

would be clearer.

I have an alternative solution to Harald's underlying problems. The

inheritance thing turned out to be superficial only. I have come to

believe that the source of confusion is the lack of a distinction between

the source of a stream and the stream itself.

I think that a clear elucidation of the model could be helpful, to start.

--

Cameras and microphones are instances of media sources. RTCPeerConnection

is a different type of media source.

Streams (used here as a synonym for MediaStreamTrack) represent a reduction

of the current operating mode of the source. For example, a camera might

produce a 1080p capture natively that is down-sampled to produce a 720p

stream. Constraints select an operating mode, settings filter the

resulting output to match the requested form.

Streams are inactive unless attached to a sink. Sinks include <video> and

<audio> tags; RTCPeerConnection; or recording and sampling.

Sources can produce multiple streams simultaneously. Simultaneous streams

require compatible camera modes. A camera that is capable of operating in

16:9 or 4:3 modes might be incapable of producing streams in both those

aspect ratios simultaneously.

The first stream created for a given source sets the operating mode of the

source. Subsequent streams can only be added if the operating mode is

compatible with the current mode.
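
A minimal sketch of this rule, assuming a toy model in which "compatible" simply means "same mode". CaptureSource, createTrack and the mode strings are hypothetical names chosen for illustration; none of this is an existing or proposed API.

```javascript
// Hypothetical model, not a browser API: the first track fixes the
// operating mode of the source; later tracks must be compatible with it.
class CaptureSource {
  constructor(supportedModes) {
    this.supportedModes = supportedModes; // e.g. ["16:9", "4:3"]
    this.activeMode = null;               // unset until the first track exists
    this.tracks = [];
  }

  createTrack(requestedMode) {
    if (!this.supportedModes.includes(requestedMode)) {
      throw new Error("unsupported mode: " + requestedMode);
    }
    if (this.activeMode === null) {
      // The first track sets the operating mode of the source.
      this.activeMode = requestedMode;
    } else if (this.activeMode !== requestedMode) {
      // Assumption: compatibility is modeled as strict equality.
      throw new Error("incompatible with current mode " + this.activeMode);
    }
    const track = { source: this, mode: requestedMode };
    this.tracks.push(track);
    return track;
  }
}
```

With a source created as new CaptureSource(["16:9", "4:3"]), a second "16:9" track is accepted, while a "4:3" request after that is rejected until the source changes mode.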

The same stream/track can be added to multiple MediaStream instances. The

conclusion thus far is that a stream is implicitly cloned by doing so.

Because the stream has the same configuration (constraints/settings), this

is trivially possible. This allows streams to be independently ended or

configured (with constraints/settings).

The first problem is that identification of streams is troublesome. The

assumption thus far is that the cloned stream shares the same identity as

its prototype. This is because the identifier in question is an identifier

for the *source* and not the stream. We should fix that.

This implies that MediaStreamTrack::id should actually be

MediaStreamTrack::sourceId, as it is currently used, though the interaction

with constraints is unclear.

A better solution would be to have MediaStreamTrack::source and

MediaStreamTrack::id, where MediaStreamTrack::source is clearly

identified and can be used to correlate different streams from the same

basic source. MediaStreamTrack::id allows two streams from the same source

to be distinguished when they have different constraints, which might be

useful for cases like simulcast.
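
The split between source and id might look roughly like the sketch below. The object shape, the createTrack helper and the "track-N" id format are assumptions made for illustration, not the MediaStreamTrack interface.

```javascript
// Hypothetical sketch: each track has its own id, while every track derived
// from the same device shares one source reference.
let nextTrackId = 0;

function createTrack(source, constraints) {
  return {
    id: "track-" + nextTrackId++,                // unique per track
    source: source,                              // shared across clones
    constraints: Object.assign({}, constraints), // private copy per track
    clone() {
      return createTrack(this.source, this.constraints);
    },
  };
}

const camera = { label: "front camera" };
const hd = createTrack(camera, { height: 720 });
const thumb = hd.clone();
thumb.constraints.height = 180; // reconfigure the clone only

console.log(hd.source === thumb.source); // true: same underlying source
console.log(hd.id !== thumb.id);         // true: distinguishable tracks
console.log(hd.constraints.height);      // 720: the original is unaffected
```

Correlating on source and distinguishing on id is what a simulcast-style use would need: two differently constrained tracks that are known to come from the same camera.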

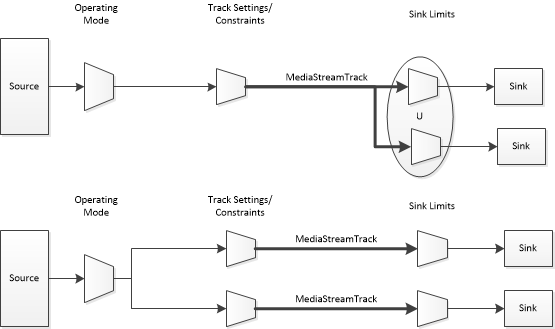

Source -- (Mode) -- (Settings) ------------- (Sink Limits) -- Sink

A stream is able to communicate information about its consumption by

sink(s) back to the originating source. (Real-time streams provide this

capability using RTCP; in-browser streams can use internal feedback

channels.) This allows sources to make choices about operating mode that

are optimized for actual uses. If your 1080p camera is only being displayed

or transmitted at 480p, it might choose to switch to a more power-efficient

mode as long as this remains true.

Information about how a stream is used can traverse the entire media path.

For instance, resizing a video sink down might propagate back so that the

source is only required to produce the lower resolution. Re-constraining

the stream might result in a change to the operating mode of the source.

Some sinks require unconstrained access to the source: sampling or

recording a stream would negate any optimizations that might otherwise be

possible.
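
One way to picture the feedback path is a function that picks the cheapest capture mode still satisfying every attached sink. This is only a sketch: the list of capture heights, the maxHeight field and chooseCaptureHeight are assumed names, and a real implementation would weigh far more than height.

```javascript
// Hypothetical: capture heights the source can produce, cheapest first.
const CAPTURE_HEIGHTS = [480, 720, 1080];

// Each sink reports the largest height it can use; a sink without a
// maxHeight (for example a recorder) needs unconstrained output.
function chooseCaptureHeight(sinks) {
  if (sinks.length === 0) {
    return null; // no sink attached: the stream is inactive
  }
  const full = CAPTURE_HEIGHTS[CAPTURE_HEIGHTS.length - 1];
  if (sinks.some((sink) => sink.maxHeight === undefined)) {
    return full; // recording or sampling negates the optimization
  }
  const needed = Math.max(...sinks.map((sink) => sink.maxHeight));
  const match = CAPTURE_HEIGHTS.find((height) => height >= needed);
  return match !== undefined ? match : full;
}

console.log(chooseCaptureHeight([{ maxHeight: 480 }]));     // 480
console.log(chooseCaptureHeight([{ maxHeight: 480 }, {}])); // 1080
```

Re-running the function whenever a sink is attached, resized or removed models the source switching to a more power-efficient mode "as long as this remains true".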

Source (1) -- (0..*) MediaStreamTrack (..) -- (0..*) Sink

This arrangement is less than optimal when it comes to attachment of a

single stream to multiple sinks. If the same stream can be attached to

multiple sinks, the implicit constraints applied by those sinks are not

made visible in quite the same way. Any limits applied by a sink must

first be merged with those from other sinks on the same stream. More

importantly, it means that sinks cannot end their attached stream without

also affecting other users of the same stream.
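
The ending problem can be shown with a toy model. makeTrack, stop and the sink objects below are hypothetical stand-ins rather than real media objects.

```javascript
// Hypothetical model of a track that can be ended or cloned.
function makeTrack() {
  return {
    ended: false,
    stop() {
      this.ended = true;
    },
    clone() {
      return makeTrack();
    },
  };
}

// Shared: both sinks hold the same track, so one sink ending it ends both.
const shared = makeTrack();
const sharedVideoSink = { track: shared };
const sharedPeerSink = { track: shared };
sharedVideoSink.track.stop();
console.log(sharedPeerSink.track.ended); // true

// Cloned: each sink owns its own track and can end it independently.
const original = makeTrack();
const clonedVideoSink = { track: original.clone() };
const clonedPeerSink = { track: original.clone() };
clonedVideoSink.track.stop();
console.log(clonedPeerSink.track.ended); // false
```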

Adam's proposal effectively creates this clone for RTCPeerConnection. The

stream used by RTCPeerConnection is a clone of the stream that it is given.

This addresses the concern for output to RTCPeerConnection, but it does

not address other uses (<audio> and <video> particularly).

URL.createObjectURL() seems like a candidate for this. I am coming to the

conclusion that createObjectURL() is no longer an entirely appropriate

style of API for this use case; direct assignment is better.

Source (1) -- (0..*) MediaStreamTrack (..) -- (0..1) Sink

[image: Inline images 2]

Now, after far too many words, on a largely tangential topic, back on

task...

I believe that composition APIs for stats and DTMF are more likely to be

successful than inheritance APIs. As it stands, going to your

RTCPeerConnection instance to get stats is ugly, but it is superior to what

Adam proposes.

What this proposal has over existing APIs is a much-needed measure of

transparency. I think that we need to continue to explore options like

this. I find the accrual of methods on RTCPeerConnection to be

problematic, not just from an engineering perspective, but from a usability

perspective.

For stats, a separate RTCStatisticsRecorder class would be much easier to

manage, even if it had to be created by RTCPeerConnection. That would be

consistent with the chosen direction on DTMF.
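
As a rough illustration of the composition style, the sketch below keeps the connection as a factory only and puts the statistics methods on a separate object. Every name and field here (FakePeerConnection, createStatisticsRecorder, bytesSent, record) is invented for the example and is not part of any specification.

```javascript
// Hypothetical shapes throughout; neither class models a real browser API.
class StatisticsRecorder {
  constructor(connection) {
    this.connection = connection; // composed with the connection, not derived from it
    this.samples = [];
  }

  // Take a snapshot of the counters the connection exposes.
  record() {
    const sample = { bytesSent: this.connection.bytesSent };
    this.samples.push(sample);
    return sample;
  }
}

class FakePeerConnection {
  constructor() {
    this.bytesSent = 0;
  }

  send(byteCount) {
    this.bytesSent += byteCount;
  }

  // The connection only creates the recorder; it gains no stats methods itself.
  createStatisticsRecorder() {
    return new StatisticsRecorder(this);
  }
}

const pc = new FakePeerConnection();
const recorder = pc.createStatisticsRecorder();
pc.send(100);
console.log(recorder.record().bytesSent); // 100
```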

--Martin

On 9 November 2012 04:14, Adam Bergkvist <adam.bergkvist@ericsson.com> wrote:

> Hi

>

> A while back I sent out a proposal [1] on API additions to represent

> streams that are sent and received via a PeerConnection. The main goal was

> to have a natural API surface for the new functionality we're defining

> (e.g. Stats and DTMF). I didn't get any feedback on the list, but I did get

> some offline.

>

> I've updated the proposal to match v4 of Travis' settings proposal [2] and

> would like to run it via the list again.

>

> Summary of the main design goals:

> - Have a way to represent a stream instance (with tracks) that is sent

> (or received) over a specific PeerConnection. Specifically, if the same

> stream is sent via several PeerConnection objects, the sent stream is

> represented by different "outbound streams" to provide fine grained control

> over the different transmissions.

>

> - Avoid cluttering PeerConnection with a lot of new API that really

> belongs on stream (and track) level but isn't applicable for the local only

> case. The representations of sent and received streams and tracks (inbound

> and outbound) provides the more precise API surface that we need for

> several of the APIs we're specifying right now as well as future APIs of

> the same kind.

>

> Here is the object structure (new objects are marked with *new*). Find

> examples below.

>

> AbstractMediaStream *new*

>  |

>  +- MediaStream

>  |   * WritableMediaStreamTrackList (audioTracks)

>  |   * WritableMediaStreamTrackList (videoTracks)

>  |

>  +- PeerConnectionMediaStream *new*

>      // represents inbound and outbound streams (we could use

>      // separate types if more flexibility is required)

>      * MediaStreamTrackList (audioTracks)

>      * MediaStreamTrackList (videoTracks)

>

> MediaStreamTrack

>  |

>  +- VideoStreamTrack

>  |   |

>  |   +- VideoDeviceTrack

>  |   |   * PictureDevice

>  |   |

>  |   +- InboundVideoTrack *new*

>  |   |   // inbound video stats

>  |   |

>  |   +- OutboundVideoTrack *new*

>  |       // control outgoing bandwidth, priority, ...

>  |       // outbound video stats

>  |       // enable/disable outgoing (?)

>  |

>  +- AudioStreamTrack

>      |

>      +- AudioDeviceTrack

>      |

>      +- InboundAudioStreamTrack *new*

>      |   // receive DTMF (?)

>      |   // inbound audio stats

>      |

>      +- OutboundAudioStreamTrack *new*

>          // send DTMF

>          // control outgoing bandwidth, priority, ...

>          // outbound audio stats

>          // enable/disable outgoing (?)

>

> === Examples ===

>

> // 1. ***** Send DTMF *****

>

> pc.addStream(stream);

> // ...

>

> var outboundStream = pc.localStreams.getStreamById(stream.id);

> var outboundAudio = outboundStream.audioTracks[0]; // pending syntax

>

> if (outboundAudio.canSendDTMF)

> outboundAudio.insertTones("**123", ...);

>

>

> // 2. ***** Control outgoing media with constraints *****

>

> // the way of setting constraints in this example is based on Travis'

> // proposal (v4) combined with some points from Randell's bug 18561 [3]

>

> var speakerStream; // speaker audio and video

> var slidesStream; // video of slides

>

> pc.addStream(speakerStream);

> pc.addStream(slidesStream);

> // ...

>

> var outboundSpeakerStream = pc.localStreams

>     .getStreamById(speakerStream.id);

> var speakerAudio = outboundSpeakerStream.audioTracks[0];

> var speakerVideo = outboundSpeakerStream.videoTracks[0];

>

> speakerAudio.priority.request("very-high");

> speakerAudio.bitrate.request({ "min": 30, "max": 120,

>     "thresholdToNotify": 10 });

> speakerAudio.bitrate.onchange = speakerAudioBitrateChanged;

> speakerAudio.onconstraintserror = failureToComply;

>

> speakerVideo.priority.request("medium");

> speakerVideo.bitrate.request({ "min": 500, "max": 1000,

>     "thresholdToNotify": 100 });

> speakerVideo.bitrate.onchange = speakerVideoBitrateChanged;

> speakerVideo.onconstraintserror = failureToComply;

>

> var outboundSlidesStream = pc.localStreams

>     .getStreamById(slidesStream.id);

> var slidesVideo = outboundSlidesStream.videoTracks[0];

>

> slidesVideo.priority.request("high");

> slidesVideo.bitrate.request({ "min": 600, "max": 800,

> "thresholdToNotify": 50 });

> slidesVideo.bitrate.onchange = slidesVideoBitrateChanged;

> slidesVideo.onconstraintserror = failureToComply;

>

>

> // 3. ***** Enable/disable on outbound tracks *****

>

> // send same stream to two different peers

> pcA.addStream(stream);

> pcB.addStream(stream);

> // ...

>

> // retrieve the *different* outbound streams

> var streamToA = pcA.localStreams.getStreamById(stream.id);

> var streamToB = pcB.localStreams.getStreamById(stream.id);

>

> // disable video to A and disable audio to B

> streamToA.videoTracks[0].enabled = false;

> streamToB.audioTracks[0].enabled = false;

>

> ======

>

> Please comment and don't hesitate to ask if things are unclear.

>

> /Adam

>

> ----

> [1] http://lists.w3.org/Archives/Public/public-webrtc/2012Sep/0025.html

> [2] http://dvcs.w3.org/hg/dap/raw-file/tip/media-stream-capture/proposals/SettingsAPI_proposal_v4.html

> [3] https://www.w3.org/Bugs/Public/show_bug.cgi?id=15861

>

>

Attachments

- image/png attachment: MediaStreamTracks.png

Received on Tuesday, 13 November 2012 22:34:19 UTC