- From: Frédéric Kayser <f.kayser@free.fr>

- Date: Tue, 17 Sep 2013 17:40:38 +0200

- To: public-respimg@w3.org

- Cc: Marcos Caceres <w3c@marcosc.com>

- Message-Id: <A161266D-CE44-4EBE-9320-9E86188F35B1@free.fr>

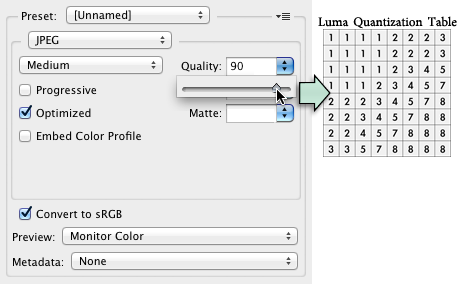

Hi,

"Image Compression for Web Developers" by Colt McAnlis is rather good. He overlooked some aspects of lossless compression, though. Both PNG and WebP in lossless mode apply transformations (or filters) to the image. This preprocessing is done line by line in PNG and on a variable-size grid in WebP, and tools like pngwolf have been built to try to find the best filter for each row: http://bjoern.hoehrmann.de/pngwolf/

Some of these transformations, like the "Paeth predictor", are a bit more advanced than the usual delta on neighboring pixels. Top-notch or experimental lossless image compressors (BIM, BCIF…) resort to this type of prediction more and more often.

About PNG optimization, since he named a few tools: they can be sorted into two families. "The old school" (Pngcrush and OptiPNG) are based on the well-known libpng and zlib code; they do their job, but don't expect the best results from them. "The in-my-own-way" ones (AdvPNG, PNGOUT, ZopfliPNG) use better but slower Deflate engines (respectively from 7-Zip, K-Zip and Zopfli), and the last two are also a bit more creative than libpng when it comes to filter picking.

The most disturbing aspect I've found is that he openly wrote about "JPG Quality". If you read the JPEG specs (http://www.w3.org/Graphics/JPEG/itu-t81.pdf), you'll find that only two quantization tables are given in Annex K (one for luma, the other for chroma), and there is no definition of such a thing as "JPG Quality". Since it's not written in stone in the specs, each application processing JPEG images is actually free to use its own quality scale and build its own quantization tables. That means that "Quality 70" in Adobe Photoshop Save for Web has nothing to do with quality 70% in ImageMagick or GIMP. Many applications rely on libjpeg from the IJG and thus share the same quality scale, but Adobe Photoshop definitely has its own.
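To make the PNG row filters concrete, here is a minimal sketch of the five filter types (None, Sub, Up, Average, Paeth) as described in the PNG specification, plus a per-row filter picker. The picker uses the simple "smallest sum of absolute signed residuals" heuristic that the PNG specification suggests; it is not pngwolf's method (pngwolf instead searches over filter combinations against the actual compressed size). The function names `filter_row` and `choose_filter` are illustrative, not from any library.

```python
from typing import Tuple


def paeth_predictor(a: int, b: int, c: int) -> int:
    """Paeth predictor: a = left byte, b = byte above, c = upper-left byte."""
    p = a + b - c
    pa, pb, pc = abs(p - a), abs(p - b), abs(p - c)
    if pa <= pb and pa <= pc:
        return a
    if pb <= pc:
        return b
    return c


def filter_row(ftype: int, row: bytes, prev: bytes, bpp: int) -> bytes:
    """Apply PNG filter type 0-4 (None, Sub, Up, Average, Paeth) to one row.

    `prev` is the unfiltered previous row (all zeros for the first row);
    `bpp` is the number of bytes per complete pixel, rounded up to 1.
    """
    out = bytearray(len(row))
    for i, x in enumerate(row):
        a = row[i - bpp] if i >= bpp else 0   # byte to the left
        b = prev[i]                            # byte above
        c = prev[i - bpp] if i >= bpp else 0  # byte above-left
        if ftype == 0:
            pred = 0
        elif ftype == 1:
            pred = a
        elif ftype == 2:
            pred = b
        elif ftype == 3:
            pred = (a + b) // 2
        else:
            pred = paeth_predictor(a, b, c)
        out[i] = (x - pred) & 0xFF  # residuals are stored modulo 256
    return bytes(out)


def choose_filter(row: bytes, prev: bytes, bpp: int) -> Tuple[int, bytes]:
    """Pick the filter with the smallest sum of |signed residuals| (ties: lowest type)."""
    def cost(data: bytes) -> int:
        return sum(v if v < 128 else 256 - v for v in data)

    best_type, best_data = 0, filter_row(0, row, prev, bpp)
    for ftype in range(1, 5):
        data = filter_row(ftype, row, prev, bpp)
        if cost(data) < cost(best_data):
            best_type, best_data = ftype, data
    return best_type, best_data
```

For a first row holding a horizontal grayscale ramp, `choose_filter(bytes(range(0, 80, 10)), bytes(8), 1)` picks Sub (type 1), turning the ramp into a 0 followed by seven 10s, which Deflate handles far better than the raw values. Paeth ties with Sub here because the row above is all zeros.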
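As an illustration of why a "quality" number is tool-specific, here is a sketch of the IJG libjpeg quality scale as I understand it from libjpeg's `jpeg_quality_scaling` and `jpeg_add_quant_table`: the 1-100 quality is turned into a percentage scale factor, which then multiplies the Annex K example table. Other encoders (Photoshop, JPEGmini) are not bound by this formula. The names `ijg_scale_factor` and `scaled_table` are hypothetical helpers; only the luminance table K.1 is shown.

```python
from typing import List

# Example luminance quantization table (Table K.1 of ITU-T T.81), row-major order.
ANNEX_K_LUMA: List[int] = [
    16, 11, 10, 16, 24, 40, 51, 61,
    12, 12, 14, 19, 26, 58, 60, 55,
    14, 13, 16, 24, 40, 57, 69, 56,
    14, 17, 22, 29, 51, 87, 80, 62,
    18, 22, 37, 56, 68, 109, 103, 77,
    24, 35, 55, 64, 81, 104, 113, 92,
    49, 64, 78, 87, 103, 121, 120, 101,
    72, 92, 95, 98, 112, 100, 103, 99,
]


def ijg_scale_factor(quality: int) -> int:
    """Map a 1-100 quality to a percentage scale factor (IJG-style curve)."""
    quality = max(1, min(100, quality))
    if quality < 50:
        return 5000 // quality
    return 200 - 2 * quality


def scaled_table(base: List[int], quality: int, force_baseline: bool = True) -> List[int]:
    """Scale a base table; entries are clamped to 1..255 (baseline) or 1..32767."""
    scale = ijg_scale_factor(quality)
    upper = 255 if force_baseline else 32767
    return [min(max((q * scale + 50) // 100, 1), upper) for q in base]
```

On this scale, quality 50 reproduces the Annex K table exactly, quality 100 collapses every divisor to 1, and quality 75 halves the table (the DC divisor 16 becomes 8). A tool with its own curve can label a very different table "75".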
Now, even if you clearly state "quality N on the libjpeg scale", you should also specify which chroma subsampling is in use. It can get even trickier, since luma and chroma quality can be set independently, and tools like JPEGmini apparently build different (ad hoc) quantization tables for each image.

Regarding the unicorn animation supposed to illustrate JPEG progressive rendering: that's not the way it really works, and Patrick Meenan's tool gives real snapshots of what's going on. Spectral selection will usually give far more detailed images in the second pass; what is shown with the unicorn is closer to PNG Adam7 interlaced mode.

"This works by encoding a few extra versions of the image, at smaller resolutions which can be transferred faster to the user." is plain wrong: it actually describes hierarchical JPEG (this is how "responsive" images were envisioned in 1992). A progressive JPEG holds the same data as a sequential JPEG; it's only stored in a different order. In a sequential JPEG, each quantized DCT matrix is written to the file whole (the DC coefficient and the 63 AC coefficients go through different steps, but basically all 64 coefficients are physically together in the file). In a progressive JPEG, the DC coefficients of all the quantized DCT matrices are written in a first scan (this translates into the 8x8 mosaic at the beginning of the rendering), the following scan holds one or more of the 63 AC coefficients, and so on for the subsequent scans until all the AC coefficients have been written. I'll try to turn the unicorn into a real illustration of this process.

I don't know why he checked JPG as "Lossless". Perhaps he's referring to the lossless mode of the original JPEG, which never gained much popularity due to poor performance, or maybe he's anticipating JPEG XT?
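To show how much chroma subsampling changes before any quantization happens, here is a small sketch that counts the samples per plane for the common schemes. It assumes the usual decimation factors (4:2:2 halves chroma horizontally, 4:2:0 halves it in both directions) and ignores the padding of planes to whole MCUs that a real encoder performs. The helper names are hypothetical.

```python
from typing import Dict, Tuple

# Horizontal and vertical chroma decimation factors for common schemes.
SUBSAMPLING: Dict[str, Tuple[int, int]] = {
    "4:4:4": (1, 1),
    "4:2:2": (2, 1),
    "4:2:0": (2, 2),
}


def plane_sizes(width: int, height: int, scheme: str) -> Dict[str, Tuple[int, int]]:
    """Return (width, height) of the Y, Cb and Cr planes, before MCU padding."""
    h, v = SUBSAMPLING[scheme]
    chroma = (-(-width // h), -(-height // v))  # ceiling division
    return {"Y": (width, height), "Cb": chroma, "Cr": chroma}


def total_samples(width: int, height: int, scheme: str) -> int:
    """Total number of samples fed to the DCT across all three planes."""
    return sum(w * h for w, h in plane_sizes(width, height, scheme).values())
```

For a 640x480 image this gives 921,600 samples in 4:4:4, 614,400 in 4:2:2 and 460,800 in 4:2:0, so two files labelled with the same "quality" can differ by a factor of two in the chroma data they encode.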
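For comparison with progressive JPEG, PNG's Adam7 interlacing sends spatial subsets of the pixels in seven passes, each on a regular grid. The sketch below uses the pass offsets and steps from the PNG specification and builds a map of which pass delivers each pixel; the helper name `adam7_pass_map` is hypothetical.

```python
from typing import List, Tuple

# (x_start, y_start, x_step, y_step) for Adam7 passes 1..7.
ADAM7: List[Tuple[int, int, int, int]] = [
    (0, 0, 8, 8),
    (4, 0, 8, 8),
    (0, 4, 4, 8),
    (2, 0, 4, 4),
    (0, 2, 2, 4),
    (1, 0, 2, 2),
    (0, 1, 1, 2),
]


def adam7_pass_map(width: int, height: int) -> List[List[int]]:
    """Return a grid giving, for every pixel, the Adam7 pass (1-7) that carries it."""
    grid = [[0] * width for _ in range(height)]
    for pass_no, (x0, y0, dx, dy) in enumerate(ADAM7, start=1):
        for y in range(y0, height, dy):
            for x in range(x0, width, dx):
                grid[y][x] = pass_no
    return grid
```

On an 8x8 tile the first row comes out as 1 6 4 6 2 6 4 6 and every odd row is pass 7. After pass 1 a decoder has one real pixel per 8x8 area and can only blow it up, which is the blocky look of the unicorn animation, whereas the first scan of a progressive JPEG carries the average (DC) of every 8x8 block.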
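The point that a progressive JPEG stores the same coefficients as a sequential one, only in another order, can be sketched by listing the order in which (block, coefficient) pairs are written. This models spectral selection only: it ignores successive approximation, entropy coding, DC differential coding and component interleaving (in a real file, AC scans carry a single component). The band list `[(0, 0), (1, 5), (6, 63)]` is a hypothetical scan script chosen for the example; note that T.81 requires the DC coefficient to be in a scan of its own.

```python
from typing import List, Tuple

Coef = Tuple[int, int]  # (block index, zigzag coefficient index; 0 is DC)


def sequential_order(num_blocks: int) -> List[Coef]:
    """Sequential JPEG: all 64 coefficients of a block are written together."""
    return [(blk, k) for blk in range(num_blocks) for k in range(64)]


def progressive_order(num_blocks: int, bands: List[Tuple[int, int]]) -> List[Coef]:
    """Progressive JPEG, spectral selection only: one scan per (Ss, Se) band,
    each scan visiting every block before the next band starts."""
    order: List[Coef] = []
    for ss, se in bands:
        for blk in range(num_blocks):
            order.extend((blk, k) for k in range(ss, se + 1))
    return order
```

With four blocks, `progressive_order(4, [(0, 0), (1, 5), (6, 63)])` starts with the four DC coefficients (the 8x8 mosaic), while `sequential_order(4)` starts with all 64 coefficients of block 0. Sorting both lists gives identical results: same data, different order, and no extra lower-resolution copies.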
Regards,

-- Frédéric Kayser

Marcos Caceres wrote:

> I guess related - "Image Compression for Web Developers", published on the 17th of Sept:
>
> http://www.html5rocks.com/en/tutorials/speed/img-compression/
>
> Frédéric, would be good also to hear your thoughts on the above article.

Attachments

- text/html attachment: stored

- image/png attachment: pshop2.png

Received on Tuesday, 17 September 2013 15:41:10 UTC